[ Behind the Scenes ]

Rethinking how viewers find what they love through conversational intelligence

Quickplay X Google Cloud

ROLE

Lead Product Designer

TIMELINE

August - September 2023

TEAM

4 Designers

SKILLS

Product Strategy & Design

Prototyping

Motion Design

OVERVIEW

Quickplay Media is the industry leader in delivering next-generation OTT solutions. Its latest collaboration with Google Cloud aimed to harness the power of generative AI to create new opportunities for user engagement, discovery, and monetization in the media and entertainment industry.

I led a team of 4 in designing a conversational, context-aware AI layer that reimagines discovery, for a showcase at IBC 2023 (International Broadcasting Convention) in Amsterdam.

This exploration helped define a forward roadmap for Quickplay’s next-gen AI experience.

PROBLEMS

Viewers struggle to discover the content they feel like watching

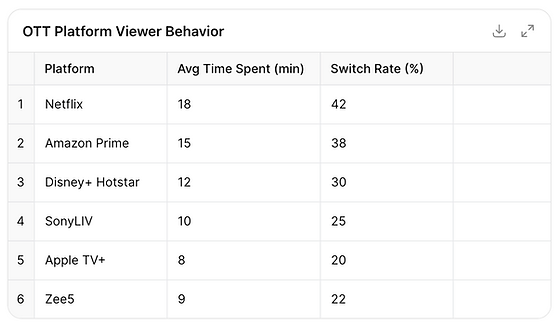

Viewers spend an average of 12–18 minutes just deciding what to watch, nearly as long as a short episode. Up to 40% abandon or switch shows within the first 10 minutes.

Decision Paralysis

The vastness of OTT catalogs overwhelms rather than empowers, leaving users scrolling endlessly.

Fragmented Context

Discovery is built around content, not context. Platforms rarely consider the user’s mood, time, or intent, such as “I want something light before bed.”

“Black box” suggestions

Most users don’t trust or understand why something is being recommended.

Group Viewing Dynamics

When watching with others, preferences clash — couples or families often abandon the process entirely because there’s no mechanism to collaboratively narrow choices.

DISCOVERY

Understanding today’s OTT discovery experience

We conducted an in-depth exploration of the OTT landscape, examining how recommendation systems function across regions, emerging discovery trends, and shifting user expectations. To ground our understanding, we mapped our own frustrations across multiple platforms, analyzed user discussions on Reddit to surface authentic pain points, and interviewed 12 viewers to understand how they choose what to watch.

OPPORTUNITY

Move discovery from algorithm push to conversational pull

Intention-based suggestions

Instead of passively pushing titles based on historical data, the system engages users in a dialogue to understand why they want to watch something. It learns intent, mood, and context, shaping discovery around the user’s current state rather than their past behavior.

NLP models can classify intent based on tone cues rather than genre labels. For example, instead of matching keywords like “funny” or “comedy”, the system parses tone and modifiers:

- “Something funny but smart” → satire or dark humor
- “Something light to relax” → situational or feel-good comedies
- “Something chaotic and stupid funny” → slapstick or sketch-based content
- “Something nostalgic and comforting” → rewatchable favorites or familiar sitcoms
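The mapping above can be sketched in a few lines of Python. This is only an illustration and not the model used in the project: a real system would rely on a trained NLP model, while this toy version scores a request against hand-written cue sets. The `TONE_CUES` table and the `classify_tone` function are hypothetical names introduced here.

```python
import re
from typing import Optional

# Hypothetical cue table: tone label -> cue words that together suggest it.
# A stand-in for a trained model, used only to illustrate the idea.
TONE_CUES = {
    "satire or dark humor": {"funny", "smart"},
    "situational or feel-good comedies": {"light", "relax"},
    "slapstick or sketch-based content": {"chaotic", "stupid", "funny"},
    "rewatchable favorites or familiar sitcoms": {"nostalgic", "comforting"},
}

def classify_tone(request: str) -> Optional[str]:
    """Return the tone label whose cue set is best covered by the request."""
    words = set(re.findall(r"[a-z]+", request.lower()))
    best_label, best_score = None, 0.0
    for label, cues in TONE_CUES.items():
        # Fraction of this label's cues that appear in the request.
        score = len(words & cues) / len(cues)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

print(classify_tone("Something chaotic and stupid funny"))
```

Scoring on the combination of modifiers, rather than on a single keyword, is what keeps “funny but smart” and “stupid funny” from landing in the same bucket.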

Beyond understanding one-time intent, the system adapts dynamically to learn from subtle cues such as skips, replays, or completion rates.

CONCEPT EXPLORATION

Guiding design principles

Set the Scene

Recommendations often miss situational nuance. Context-aware voice discovery lets users reshape that context in real time through natural speech.

AI detects:

horror (genre intent) + with my kid (context constraint)
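As a rough sketch of that split, the snippet below separates a spoken request into a genre intent and a list of context constraints. It assumes simple phrase matching in place of real speech understanding, and the `GENRES` set, the `CONSTRAINT_PHRASES` table, and the constraint tags are all hypothetical.

```python
from typing import Dict, List, Optional, Union

# Hypothetical vocabularies, for illustration only.
GENRES = {"horror", "comedy", "thriller"}
CONSTRAINT_PHRASES = {
    "with my kid": "family_safe",
    "before bed": "short_and_calm",
}

def parse_request(utterance: str) -> Dict[str, Union[Optional[str], List[str]]]:
    """Split an utterance into a genre intent and context constraints."""
    text = utterance.lower()
    # First known genre mentioned in the utterance, if any.
    genre = next((g for g in sorted(GENRES) if g in text), None)
    # Every known context phrase that appears in the utterance.
    constraints = [tag for phrase, tag in CONSTRAINT_PHRASES.items() if phrase in text]
    return {"genre": genre, "constraints": constraints}

print(parse_request("I want to watch horror with my kid"))
```

Keeping the constraint separate from the genre lets the recommender filter a horror shortlist for family-safe titles instead of discarding the genre request.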

Thematic Pathways

Typical genres flatten emotional depth. For instance, “comedy” can mean sharp satire for one person and goofy action for another.

Users explore “witty satire,” “offbeat adventures,” or “comfort humor,” shaped dynamically by their watch history and collective viewer reactions.

Smart Schedule Sync

The AI syncs with the user’s calendar to understand available viewing windows. Based on this, it recommends content that fits the moment: from quick watch sessions to finishing an in-progress show, boosting consistency and reducing content drop-offs.
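A minimal sketch of the fitting step, assuming the free viewing window has already been read from the calendar as a number of minutes. The `Title` class and `fit_to_window` function are hypothetical, and the ordering rule (in-progress shows first, then the longest title that still fits) is one possible choice rather than the shipped logic.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Title:
    name: str
    runtime_min: int           # remaining runtime in minutes
    in_progress: bool = False  # True if the viewer has already started it

def fit_to_window(titles: List[Title], free_minutes: int) -> List[Title]:
    """Keep titles that fit the window, in-progress first, then longest first."""
    fitting = [t for t in titles if t.runtime_min <= free_minutes]
    return sorted(fitting, key=lambda t: (not t.in_progress, -t.runtime_min))

catalog = [
    Title("Feature film", 120),
    Title("Sitcom episode", 22),
    Title("Drama episode", 45, in_progress=True),
]
for title in fit_to_window(catalog, free_minutes=50):
    print(title.name)
```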

Community Curations

Crowd-sourced micro-curations — viewer-made lists built around moods, events, or trends. AI curates and ranks these community lists while surfacing top-rated moods and themes.

Users follow curators or moods they connect with, or create custom watchlists to share.

Reason Tags in Cards

Most OTT platforms tell you what to watch; reason tags tell you why. Each recommendation comes with a short, AI-generated tag that explains the match based on tone, theme, and context.

For example: “Since you’ve been watching light thrillers at night, this one balances suspense with calm pacing.”

or “Viewers who enjoyed sarcastic dialogue and strong female leads rated this 4.8⭐.”

From Debates to Discovery

This feature uses a playful, voice-enabled quiz to help couples find common ground in what to watch. By combining both users’ watch histories, genre preferences, and quiz responses, the AI identifies overlapping themes and tonal preferences to suggest shows that satisfy both.
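One way to picture the overlap step is to score each theme for both viewers and keep only the themes they share. The sketch below assumes each viewer's history and quiz answers have already been reduced to a theme-to-affinity score between 0 and 1. The `shared_preferences` function and the sample scores are hypothetical.

```python
from typing import Dict, List, Tuple

def shared_preferences(a: Dict[str, float], b: Dict[str, float]) -> List[Tuple[str, float]]:
    """Rank themes both viewers like, scored by the less enthusiastic viewer."""
    # Taking the minimum means a theme only ranks high if both viewers rate it high.
    shared = {theme: min(a[theme], b[theme]) for theme in a.keys() & b.keys()}
    return sorted(shared.items(), key=lambda item: (-item[1], item[0]))

viewer_a = {"witty satire": 0.9, "slow-burn thriller": 0.4, "comfort humor": 0.7}
viewer_b = {"comfort humor": 0.8, "witty satire": 0.5, "true crime": 0.9}

print(shared_preferences(viewer_a, viewer_b))
```

Using the lower of the two scores favors picks that neither person has to tolerate, which matches the goal of finding common ground.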

FEEDBACK

The biggest learning was that voice needs visible body language.

Silence became one of the most critical yet overlooked elements. Users interpreted unmarked pauses as lag or error.

Users trusted the AI more when it reacted immediately, even before answering — small acknowledgments like “Got it” or a visual flicker reassured them that input was received.

CONSIDERATIONS

Designing for Silence

AI thinking needs to be seen, not just heard — subtle animations, micro-movements, or ambient light shifts can visually express cognitive states like “thinking” or “searching”, making waiting feel intentional rather than awkward.

MEASURABLE IMPACT